Why AI Gets Your Hotel Facts Wrong (and How to Fix It)

In late January 2026, tourists started turning up at the Weldborough Hotel in north-east Tasmania asking for the hot springs. The hot springs do not exist. An AI-written travel article on a tour operator's site had invented "Weldborough Hot Springs" as one of seven thermal experiences worth a detour. The local river is freezing, the pub serves beer, and the owner, Kristy Probert, had to start telling coach groups of twenty-four mainlanders that they had driven three hours for a fiction.

The pub did not write the article. The pub did not put the words on the internet. The pub is dealing with the operational consequences anyway. Somebody else's machine wrote something about a hospitality business and published it as travel advice, and the team on the ground was left to handle the fallout.

Weldborough is one named case. The wider pattern is harder to ignore. Hotelworld AI's World's Best at AI Index, released February 2026 across 2.36 million data points and 2,105 hotel brands, found that nearly half of hotel brands are being misrepresented by AI. It is not neutral academic research, but it is directionally useful: the methodology is published and the finding lines up with the rest of the work the industry has put out this year.

Where AI Actually Reads From

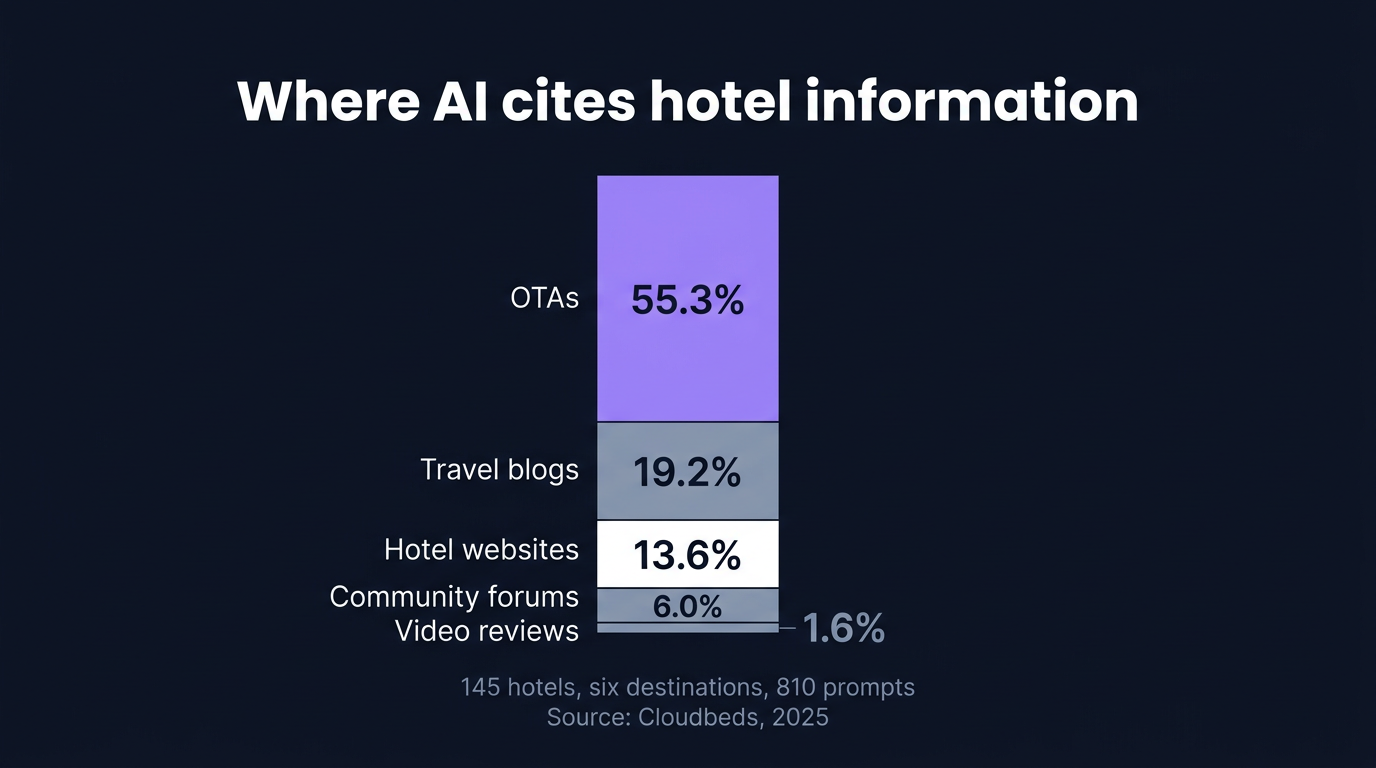

AI assistants cite the hotel's own website roughly 14% of the time. OTAs supply more than half of all citations.

Cloudbeds analysed 145 top-ranked hotels across six destinations using 810 prompts against ChatGPT, Gemini and Perplexity in 2025. OTAs supplied 55.3% of the citations, with Booking, Expedia and Tripadvisor in the lead. Hotel websites accounted for 13.6%. The rest came from travel blogs, community forums and video.

Worth separating two things here, because they get conflated. Where the AI learns the facts and where it sends the visitor are different layers. AI answers often learn hotel facts from OTAs and third-party surfaces, even when the final booking link points direct, as our work on direct-link share for ChatGPT showed. The model may send a traveller straight to your site, while the facts it used to recommend you came from Booking.com, Tripadvisor, Reddit or an old travel blog.

When the AI answers "best boutique hotel in Lisbon with a rooftop", it does not load your homepage. It assembles a handful of documents from a retrieval layer and synthesises. Your homepage is one of those documents at best. The OTA listing, the Tripadvisor thread and the travel blogger's 2024 round-up usually outweigh it.

Five Ways AI Misrepresents Hotels

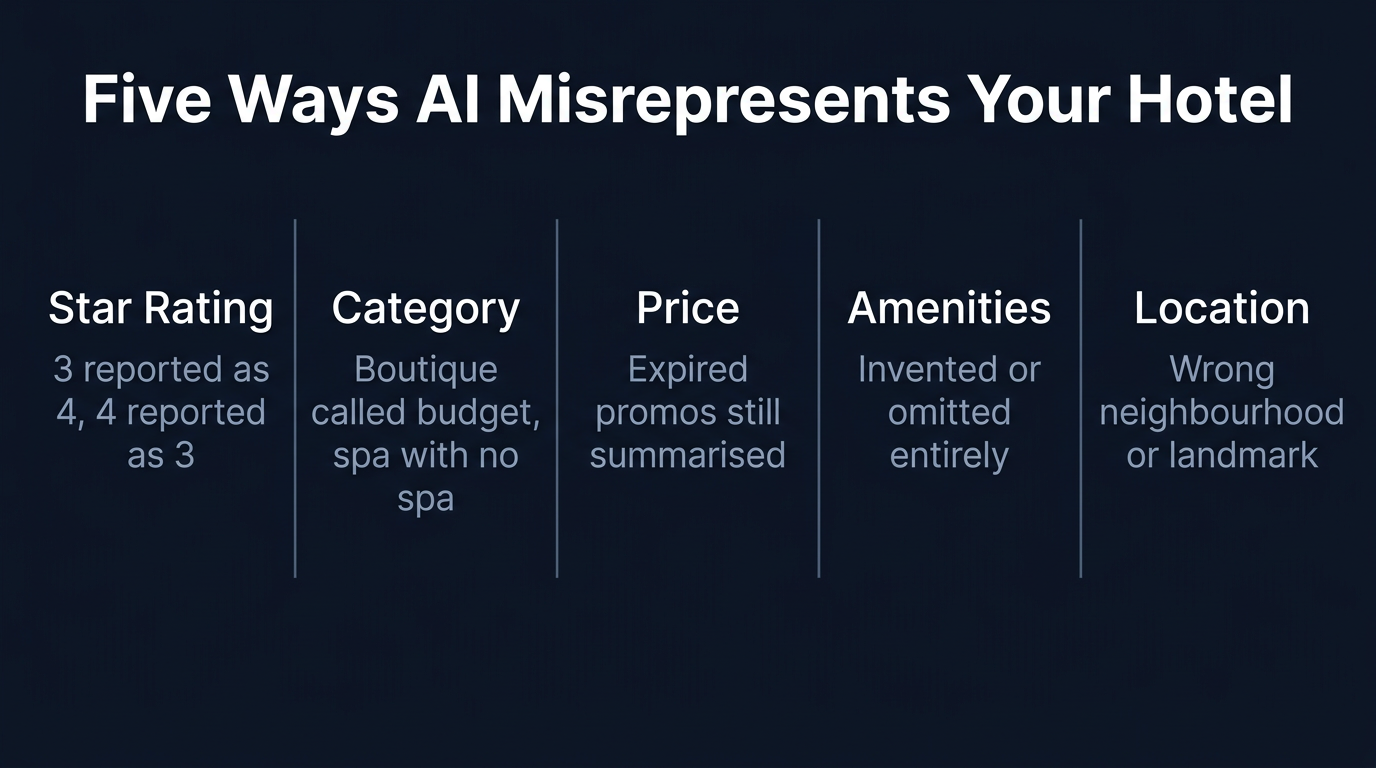

The same misrepresentation patterns recur across the studies and the operator anecdotes worth reading from this spring.

Star rating drift. HotelRank's February 2026 anatomy study flagged hotels listed as 3-star on Google Business Profile when the property is actually 4-star, and the reverse. A separate ChatGPT Agent booking test reported a hotel as four stars when it was actually three. The category is hardly cosmetic. A revenue manager who has spent two years repositioning a hotel from upper-midscale to upscale is silently undone when ChatGPT, working from stale rating metadata, compares the property against its old peer set rather than its actual one.

Category misclassification. A boutique hotel called "budget", a spa property whose spa never gets mentioned, a beachfront hotel filed under city-centre, a luxury independent grouped with chain economy. Cloudbeds documented that chain properties dominate at the recommendation level (72.4% of recommendations), often because the AI files independents into whatever generic bucket their thinnest source describes.

Outdated price and offer information. A spring promo that ran in March and was never removed from the offers page gets summarised in May. The user clicks through expecting £180 per night, the live booking engine shows £290, and they close the tab. We see this regularly in scans, especially where a marketing team owns the offers page and a revenue team owns the booking engine. The AI is not lying; it is reading what is still on the page.

Amenity hallucination and omission. Two failure modes here. The AI invents an amenity, often a spa, a rooftop or airport transfer, or it misses one that genuinely exists. The miss is more common and more expensive. HotelRank's schema adoption study found that 92.3% of hotels with JSON-LD are missing the amenityFeature field entirely. If the AI cannot find a structured claim about your amenities on your site, it borrows one from the OTA, which is sparse by design.

Location and neighbourhood drift. With no canonical geo coordinates on the hotel's own site, the AI defers to whichever OTA or Google Business Profile entry it trusts more. The hotel two blocks from the Roman amphitheatre gets described as "near the train station." Both are technically true. Only one helps you sell.

Why It Happens

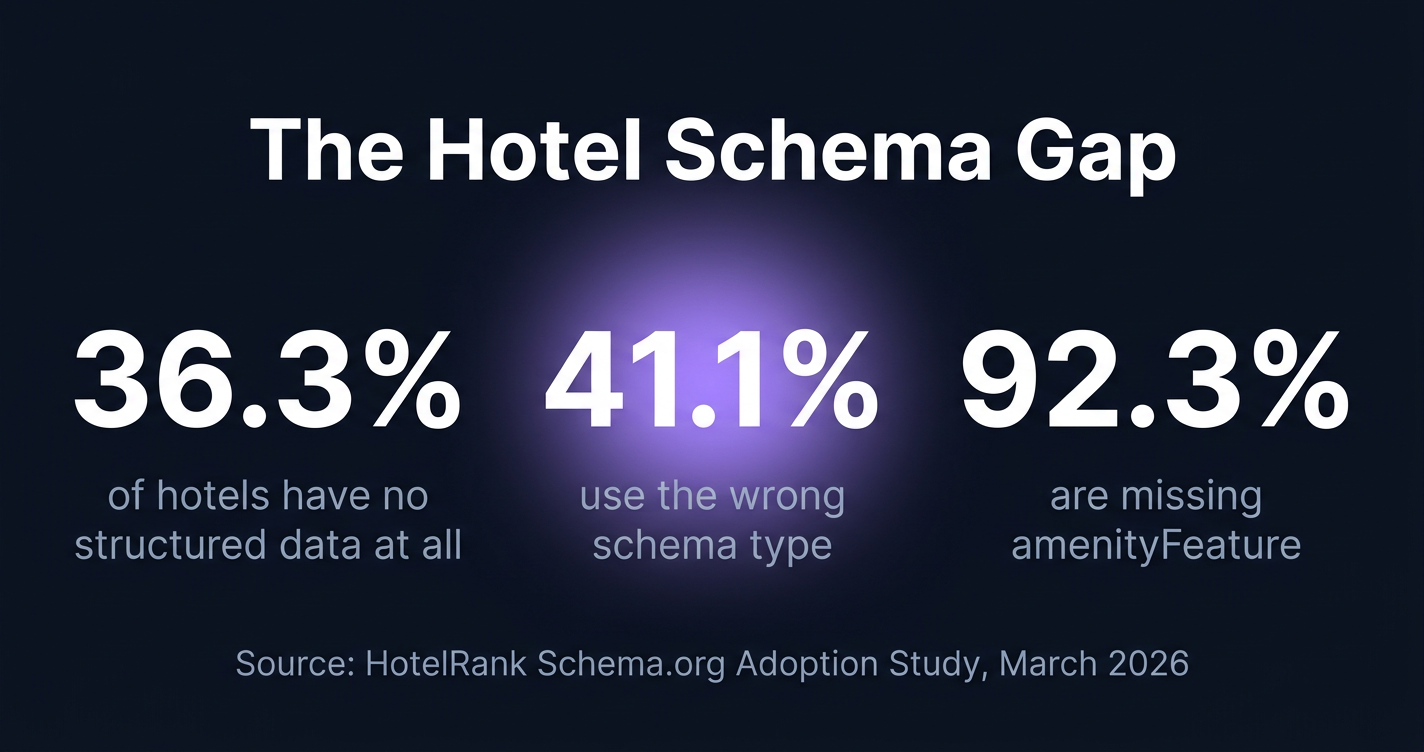

The cause is structural, not a content problem at any single property. OTAs publish more machine-readable hotel content than hotels do and refresh it constantly with live inventory. Hotels publish less of their identity in structured form. HotelRank's schema adoption study found that 36.3% of hotels have no structured data at all, and 41.1% of the ones that do are using the wrong schema type, typically Organization or LocalBusiness rather than Hotel or LodgingBusiness.
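The three buckets in that finding (no structured data, wrong schema type, correct type) can be checked mechanically. The sketch below is a minimal illustration, not HotelRank's methodology: it assumes you have already extracted the JSON-LD text from a page, and the function name `classify_schema` and the `LODGING_TYPES` set are hypothetical names chosen for this example.

```python
import json

# Types the article treats as correct for a hotel page. Schema.org also
# defines other LodgingBusiness subtypes; extend this set if they apply.
LODGING_TYPES = {"Hotel", "LodgingBusiness"}


def classify_schema(jsonld_text: str) -> str:
    """Return 'none', 'wrong_type' or 'ok' for one JSON-LD string."""
    if not jsonld_text.strip():
        return "none"
    data = json.loads(jsonld_text)

    # JSON-LD may be a single node, a list of nodes, or nodes under @graph.
    if isinstance(data, dict) and "@graph" in data:
        nodes = data["@graph"]
    else:
        nodes = data
    if isinstance(nodes, dict):
        nodes = [nodes]

    found_types = set()
    for node in nodes:
        if not isinstance(node, dict):
            continue
        node_type = node.get("@type", [])
        # @type may be a single string or a list of strings.
        found_types.update([node_type] if isinstance(node_type, str) else node_type)

    if not found_types:
        return "none"
    return "ok" if found_types & LODGING_TYPES else "wrong_type"


# Illustrative inputs only; none of these come from a real property.
organization_only = '{"@context": "https://schema.org", "@type": "Organization"}'
hotel_in_graph = '{"@graph": [{"@type": "WebSite"}, {"@type": ["Hotel", "LocalBusiness"]}]}'

print(classify_schema(""))
print(classify_schema(organization_only))
print(classify_schema(hotel_in_graph))
```

A page that declares only Organization or LocalBusiness lands in the "wrong_type" bucket here, which is the case the study describes as typical.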

When sources disagree about the same property, models tend to either pick whichever source they trust more or, in Perplexity's case, trim the citation altogether when consistency is low. Inconsistency between your website, your Booking page, your Tripadvisor profile and your Google Business Profile does not always produce a confused mention. Often it produces silence. The hotel that named its property differently across six listing platforms five years ago is paying that price now in invisibility rather than in a wrong mention.

How to Fix It

Make the hotel website the most precise public source about the hotel. Publish a full Hotel or LodgingBusiness JSON-LD block with an explicit starRating, geo coordinates with latitude and longitude, a full amenityFeature list, a priceRange, and a description that names the property in the same string used on Booking and Google Business Profile. The amenity grain matters. "EV charging" on the page is not the same as amenityFeature: Tesla Supercharger Level 3 (250kW) in your structured data, and the model that fields a Model Y driver's "where can I supercharge overnight in Lisbon" prompt is reading the second version, not the first.
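As a rough illustration of what such a block could contain, the sketch below assembles one in Python and prints it as JSON-LD. The property names (starRating, geo, amenityFeature, priceRange) are Schema.org vocabulary; the helper `build_hotel_jsonld`, the hotel name, the coordinates and every value passed in are invented for the example and should be replaced with the property's real data.

```python
import json


def build_hotel_jsonld(name, description, star_rating, latitude, longitude,
                       amenities, price_range):
    """Assemble a Schema.org Hotel node as a plain dict (hypothetical helper)."""
    return {
        "@context": "https://schema.org",
        "@type": "Hotel",
        # Use the exact name string that appears on the OTA and GBP listings.
        "name": name,
        "description": description,
        "starRating": {"@type": "Rating", "ratingValue": str(star_rating)},
        "geo": {
            "@type": "GeoCoordinates",
            "latitude": latitude,
            "longitude": longitude,
        },
        # One LocationFeatureSpecification per amenity, named specifically.
        "amenityFeature": [
            {"@type": "LocationFeatureSpecification", "name": amenity, "value": True}
            for amenity in amenities
        ],
        "priceRange": price_range,
    }


# Every value below is a placeholder, not data from a real hotel.
example_block = build_hotel_jsonld(
    name="Example Rooftop Hotel",
    description="Example Rooftop Hotel is a boutique hotel with a rooftop bar.",
    star_rating=4,
    latitude=38.7139,
    longitude=-9.1394,
    amenities=["Rooftop bar", "Tesla Supercharger Level 3 (250kW)"],
    price_range="£180-£290",
)

print(json.dumps(example_block, indent=2, ensure_ascii=False))
```

The printed JSON would normally be embedded in the page inside a script tag of type application/ld+json. Generating it from one source of truth, rather than hand-editing it per page, makes it easier to keep the name string and amenity wording identical everywhere.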

Audit entity consistency across every public surface. Hotel name spelled identically, down to capitalisation and any trailing "Hotel". Star rating consistent across the website, Booking, Expedia, Tripadvisor, Google Business Profile, Bing Places. Address in the same format. Latitude and longitude consistent. Amenity vocabulary aligned rather than paraphrased differently per surface. Divergences here tend to cost citations rather than just confuse them.
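A consistency audit of this kind can start as a simple comparison script. The sketch below assumes you have already copied each surface's name, star rating and coordinates into a dict by hand or by export; the function `audit_consistency`, the field names, the tolerance value and the sample listings are all hypothetical, and the website record is treated as the reference only as a working assumption.

```python
from math import isclose


def audit_consistency(listings, coord_tolerance=0.0005):
    """Compare every surface against the 'website' record and list divergences."""
    reference = listings["website"]
    issues = []
    for surface, record in listings.items():
        if surface == "website":
            continue
        # Names must match exactly, including capitalisation and trailing "Hotel".
        if record["name"] != reference["name"]:
            issues.append(f"{surface}: name {record['name']!r} != {reference['name']!r}")
        if record["star_rating"] != reference["star_rating"]:
            issues.append(
                f"{surface}: star rating {record['star_rating']} != {reference['star_rating']}"
            )
        # Coordinates are compared with a small absolute tolerance (assumed value).
        for axis in ("latitude", "longitude"):
            if not isclose(record[axis], reference[axis], abs_tol=coord_tolerance):
                issues.append(f"{surface}: {axis} {record[axis]} != {reference[axis]}")
    return issues


# Placeholder listings for an invented property.
sample_listings = {
    "website": {"name": "Example Rooftop Hotel", "star_rating": 4,
                "latitude": 38.7139, "longitude": -9.1394},
    "booking": {"name": "Example Rooftop Hotel", "star_rating": 4,
                "latitude": 38.7139, "longitude": -9.1394},
    "google_business_profile": {"name": "Example Rooftop", "star_rating": 3,
                                "latitude": 38.7200, "longitude": -9.1394},
}

for issue in audit_consistency(sample_listings):
    print(issue)
```

In this sample the Booking record matches and the Google Business Profile record diverges on name, star rating and latitude, so three issues are reported. Address format and amenity vocabulary could be added as further fields in the same loop.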

Review what the AI is currently saying, weekly. Most hotel teams have never asked the question directly. Open ChatGPT, Perplexity and Gemini. Ask each one to recommend a hotel in your category, in your city, near your nearest landmark. Read the reply slowly. The gap between what the AI says and what you actually offer is the work list. The 5-minute walkthrough is in our hotel AI visibility check; the rationale for why structured data carries the weight is in the hotel schema markup guide.
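Once the claims from a reply are written down, the gap can be expressed as two set differences: what the AI said that you do not offer, and what you offer that the AI never mentioned. The sketch below is one simple way to do that, assuming the amenities have been transcribed by hand from the AI reply and from your own site; the function `amenity_gap` and the sample data are hypothetical, and matching is by exact lower-cased string, so paraphrases would need manual alignment first.

```python
def amenity_gap(ai_claimed, actually_offered):
    """Split the difference into invented and omitted amenities."""

    def normalise(items):
        # Case and surrounding whitespace are ignored; wording is not.
        return {item.strip().lower() for item in items}

    claimed = normalise(ai_claimed)
    offered = normalise(actually_offered)
    return {
        "invented": claimed - offered,  # AI says it exists, the hotel does not offer it
        "omitted": offered - claimed,   # the hotel offers it, AI never mentions it
    }


# Placeholder data transcribed from an imaginary AI reply and an imaginary site.
ai_reply_amenities = ["Rooftop bar", "Spa", "Airport transfer"]
site_amenities = ["rooftop bar", "EV charging", "Free breakfast"]

gap = amenity_gap(ai_reply_amenities, site_amenities)
print("Invented by the AI:", sorted(gap["invented"]))
print("Missing from the AI reply:", sorted(gap["omitted"]))
```

The two resulting lists map onto the two amenity failure modes described earlier: invented items need correcting at the source the AI read, and omitted items usually point to missing structured data on the hotel's own site.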

Stiplo Mystery Shop

Stiplo Mystery Shop was built so that the GM does not have to wait for a coach group of twenty-four to arrive at the door before realising the AI has rewritten the property's amenities.

We run a digital mystery shop against the AI surface as well as the website. We ask ChatGPT, Perplexity and Gemini what they say about your property, extract every factual claim, and cross-reference it against your live site, your structured data, your OTA listings and your Google Business Profile. The output is the list of misrepresentations, where each one is coming from, and the fixes ranked by reach.

If you do one thing this month, audit your homepage JSON-LD against the three numbers in that schema graphic above. If you do two, walk one ChatGPT conversation about your property and write down every claim it makes that does not match what is on your site. Those two exercises will surface most of the next quarter's work. Run a free digital mystery shop for one property.

Frequently Asked Questions

Can a hotel actually correct what AI says about it?

Not directly. There is no edit button in ChatGPT. In practice, the fastest single lever is filing the correction in Google Business Profile, because GBP propagates to Gemini quickly and feeds the wider web. Submitting the corrected page through Bing Webmaster Tools is sensible hygiene for ChatGPT and Perplexity visibility, but treat it as crawl acceleration rather than a guaranteed correction mechanism. None of this works without the underlying structured data on the property's own site being consistent with the corrections you are filing elsewhere.

How long does it take for AI to update hotel information after a change?

Update speed varies sharply by model, query type and crawl frequency. For live-web answers, changes can sometimes appear within days, but there is no guaranteed window. Branded hotel queries usually move faster than generic destination queries because the system has clearer entity intent. Gemini moves fastest when the change is made in Google Business Profile and slower when it lives only on the hotel website. Perplexity tends to be the most live of the three for branded queries. You are outweighing older sources, not pushing a single update.

Why do ChatGPT and Gemini say different things about the same hotel?

Different retrieval layers. ChatGPT pulls from Bing and its own crawler, with growing first-party integrations to Expedia and Booking via OpenAI's app platform. Gemini pulls from Google search and Google Business Profile, with YouTube weighted into video-rich queries. Perplexity blends a proprietary index with Google and Bing and overweights review aggregators. The same hotel can look strong on one and invisible on another in the same week.

Is this a brand-safety problem or a revenue problem?

It depends on the misrepresentation. A wrong star rating or wrong neighbourhood is mostly a revenue problem; the AI is quietly steering qualified guests elsewhere. An invented amenity or attraction is mostly a brand problem; the arrival contradicts the booking promise. Weldborough is both at once. An attraction that does not exist sent real guests to a real pub which then had to manage their disappointment.
